What is an AI Agent and How to Build One

1 Nov 2025, 3:30 pm

Last Updated: 8 Nov 2025, 5:30 pm

Author: Satheesh Challa

Understanding AI Agents: The Foundation of Intelligent Systems

AI agents are rapidly becoming the cornerstone of modern artificial intelligence applications. From virtual assistants like Siri and Alexa to sophisticated autonomous systems making complex decisions, AI agents are transforming how we interact with technology. But what exactly is an AI agent, and how can you build one?

This comprehensive guide will take you from the fundamentals of AI agents to building your own intelligent system. Whether you're a developer looking to integrate AI into your applications or a business leader seeking to understand this transformative technology, this guide provides the knowledge and practical steps you need to get started with AI agents.

What is an AI Agent? Defining Intelligence in Software

An AI agent is a software entity that perceives its environment through sensors (or inputs), makes decisions based on that perception, and takes actions to achieve specific goals. Unlike traditional software that executes a fixed sequence of steps, an agent chooses its next action based on what it observes, and more advanced agents can adapt, learn, and make autonomous decisions in complex, dynamic environments.
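The perceive-decide-act cycle in this definition can be sketched in a few lines. This is a minimal illustration, not a production design: the `run_agent` and `decide` names, the temperature readings, and the 20 to 22 degree comfort band are all assumptions made up for the example.

```python
from typing import Callable


def run_agent(percepts: list[float],
              decide: Callable[[float], str],
              act: Callable[[str], None]) -> None:
    """Feed each percept through decide(), then hand the chosen action to act()."""
    for percept in percepts:
        action = decide(percept)
        act(action)


def decide(temperature_c: float) -> str:
    """Hypothetical goal: keep a room between 20 and 22 degrees Celsius."""
    if temperature_c < 20.0:
        return "heat"
    if temperature_c > 22.0:
        return "cool"
    return "idle"


actions: list[str] = []
run_agent([18.5, 21.0, 23.4], decide, actions.append)
print(actions)  # ['heat', 'idle', 'cool']
```

Here the "environment" is just a list of readings and the "action" is appending to a list; in a real agent those would be replaced by live inputs and side effects such as API calls.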

Core Characteristics of AI Agents

- Autonomy - AI agents operate independently without constant human intervention. They can make decisions and take actions based on their programming and learned experiences, functioning continuously to achieve their objectives.

- Reactivity - Agents perceive and respond to changes in their environment in real time. They can detect a change, whether it is a user query, new sensor data, or a system event, and react appropriately.

- Proactivity - Beyond reactive behavior, agents can take initiative to achieve goals. They don't just respond to stimuli; they actively pursue objectives and plan sequences of actions.

- Social Ability - Many AI agents can interact with other agents or humans through various communication protocols, enabling collaboration and coordination.

- Learning Capability - Advanced agents improve their performance over time by learning from experience, adapting to new situations, and refining their decision-making processes.

The power of AI agents lies in their ability to combine these characteristics. A truly intelligent agent doesn't just follow scripts—it perceives, reasons, adapts, and acts in ways that maximize its chances of achieving defined goals, even in unpredictable environments.

Types of AI Agents: A Comprehensive Classification

AI agents exist on a spectrum from simple reactive systems to complex learning entities. Understanding these different types helps you choose the right architecture for your specific use case and guides your development approach.

The Five Categories of AI Agents

- Simple Reflex Agents - The most basic type, these agents select actions based solely on current percepts, ignoring the rest of the percept history. They operate on condition-action rules (if-then statements). While simple and fast, they struggle with partially observable environments.

- Model-Based Reflex Agents - These agents maintain an internal state to track aspects of the world that aren't currently observable. They use a model of how the world works to update their internal state based on actions and percepts.

- Goal-Based Agents - Beyond simply reacting, these agents consider future consequences of actions and choose behaviors that achieve specific goals. They use search and planning algorithms to find sequences of actions leading to desired outcomes.

- Utility-Based Agents - These sophisticated agents don't just aim for goals—they optimize for the best outcome. They use utility functions to measure how desirable different states are and make decisions that maximize expected utility.

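- Learning Agents - The most advanced type, learning agents improve their performance over time through experience. In the classic formulation they combine a performance element that selects actions, a critic that evaluates how well the agent is doing, a learning element that makes improvements, and a problem generator that suggests exploratory actions.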

Modern AI applications often combine multiple agent types. For example, a customer service chatbot might use goal-based reasoning for task completion, utility-based decision making for prioritization, and learning capabilities to improve from interactions.
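To make the difference between goal-based and utility-based behavior concrete, here is a small sketch of expected-utility maximization. The two routes, their probabilities, and their utility values are hypothetical numbers chosen only for illustration.

```python
# Hypothetical outcomes: action -> list of (probability, utility) pairs.
OUTCOMES = {
    "fast_route": [(0.7, 10.0), (0.3, -5.0)],  # quick, but may hit traffic
    "safe_route": [(1.0, 6.0)],                # slower, always reliable
}


def expected_utility(outcomes: list[tuple[float, float]]) -> float:
    """Probability-weighted sum of utilities for one action."""
    return sum(p * u for p, u in outcomes)


def choose_action(options: dict[str, list[tuple[float, float]]]) -> str:
    """Pick the action with the highest expected utility."""
    return max(options, key=lambda name: expected_utility(options[name]))


print(choose_action(OUTCOMES))  # safe_route
```

Both routes reach the goal, so a purely goal-based agent has no reason to prefer one. The utility-based agent picks `safe_route` because its expected utility (6.0) beats that of `fast_route` (0.7 * 10 + 0.3 * -5 = 5.5).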

AI Agent Types Overview

| S.No | Type | Description | Example |
|---|---|---|---|
| 1 | Simple Reflex Agents | React to current percepts based on condition-action rules | Thermostat, basic chatbot |
| 2 | Model-Based Agents | Maintain internal state to track aspects of the world | Navigation systems, game AI |
| 3 | Goal-Based Agents | Make decisions to achieve specific goals | Route planners, task schedulers |
| 4 | Utility-Based Agents | Maximize utility function to make optimal decisions | Recommendation systems, trading bots |
| 5 | Learning Agents | Improve performance over time through experience | AI assistants, adaptive systems |

The Architecture of AI Agents: Key Components

Building an effective AI agent requires understanding its fundamental architecture. While implementations vary, most AI agents share common structural components that work together to create intelligent behavior.

Essential Agent Components

- Perception Module - Receives and processes inputs from the environment. This could be sensor data, API responses, user queries, or system events. The perception module transforms raw data into a format the agent can reason about.

- Knowledge Base - Stores information about the world, domain knowledge, past experiences, and learned patterns. This can range from simple rule sets to complex neural networks or vector databases.

- Reasoning Engine - The core decision-making component that processes perceptions, accesses knowledge, and determines appropriate actions. In LLM-based agents, this is typically the language model performing inference.

- Action Module - Executes decisions by interacting with the environment. Actions might include API calls, database operations, generating responses, or controlling physical actuators.

- Learning Component - For adaptive agents, this component updates the knowledge base and improves decision-making based on feedback and experience.

- Memory System - Maintains context across interactions. Short-term memory handles immediate context, while long-term memory stores important information for future use.

Modern AI agents, especially those powered by Large Language Models, often implement these components through prompts, tool integrations, and specialized frameworks. The architecture can be implemented with varying levels of sophistication depending on your requirements.

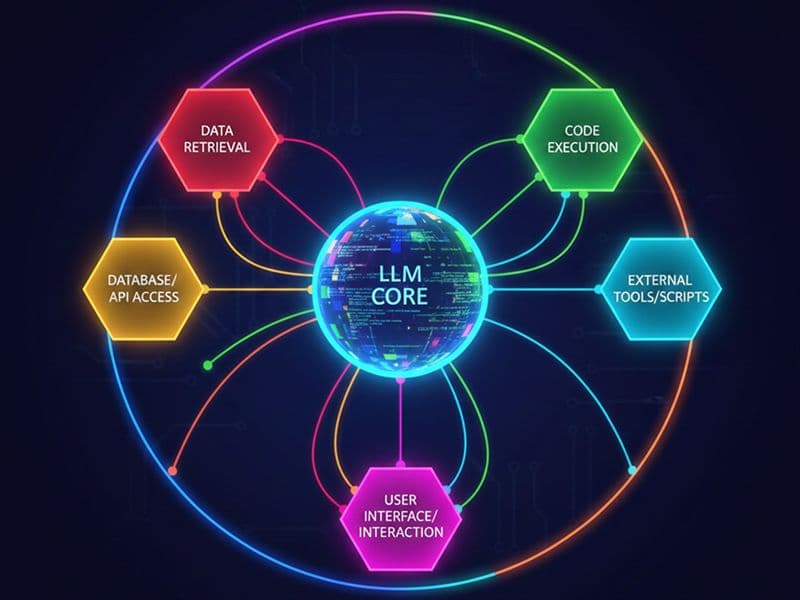
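As a rough sketch of how the components above fit together, the class below maps each one to a method or field. It assumes a deliberately trivial keyword lookup as the "reasoning engine"; the `Agent` class, its method names, and the sample knowledge entry are hypothetical, and a real agent would typically replace `reason` with an LLM call.

```python
from dataclasses import dataclass, field


@dataclass
class Agent:
    knowledge: dict[str, str]                        # knowledge base: keyword -> answer
    memory: list[str] = field(default_factory=list)  # short-term memory of past inputs

    def perceive(self, raw: str) -> str:
        """Perception module: normalize raw input."""
        return raw.strip().lower()

    def reason(self, percept: str) -> str:
        """Reasoning engine: look for a known keyword in the percept."""
        for keyword, answer in self.knowledge.items():
            if keyword in percept:
                return answer
        return "I don't know."

    def act(self, decision: str) -> str:
        """Action module: here the only action is returning a reply."""
        return decision

    def step(self, raw: str) -> str:
        """One pass through perception, memory update, reasoning, and action."""
        percept = self.perceive(raw)
        self.memory.append(percept)
        return self.act(self.reason(percept))


agent = Agent(knowledge={"refund": "Refunds are handled by the billing team."})
print(agent.step("  How do I get a REFUND? "))  # Refunds are handled by the billing team.
```

The learning component is left out on purpose: in this sketch it would be any code that updates `knowledge` based on feedback.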

LLM-Powered Agents: The Modern Approach

The emergence of Large Language Models (LLMs) like GPT-4, Claude, and Gemini has revolutionized AI agent development. These models provide powerful reasoning capabilities, natural language understanding, and the ability to follow complex instructions—making them ideal foundations for intelligent agents.

Why LLMs Excel as Agent Brains

- Natural Language Processing - LLMs understand and generate human language naturally, enabling intuitive human-agent interaction without complex NLP engineering.

- Reasoning Capabilities - Modern LLMs can break down complex problems, plan sequences of actions, and make logical inferences—essential for agent autonomy.

- Tool Use - LLMs can be taught to use external tools through function calling or tool APIs, dramatically extending agent capabilities beyond text generation.

- Few-Shot Learning - Agents can be configured for new tasks through examples and instructions rather than extensive retraining.

- Broad Knowledge - Pre-trained on vast text corpora, LLMs bring extensive world knowledge to agent applications.

LLM-powered agents typically follow a "perception-reasoning-action" loop: they receive input (perception), use the LLM to interpret and decide (reasoning), and execute appropriate actions through tool calls or API integrations. This pattern has become the dominant paradigm for building modern AI agents.

Building Your First AI Agent: A Step-by-Step Guide

Let's walk through building a practical AI agent from scratch. We'll create a research assistant agent that can search the web, analyze information, and provide synthesized answers to complex questions.

Step 1: Define Your Agent's Purpose and Capabilities

Before writing any code, clearly define what your agent will do. For our research assistant:

- Primary Goal: Answer user questions with accurate, well-researched information

- Key Capabilities: Web search, information synthesis, source citation

- Constraints: Must cite sources, admit when uncertain, avoid making up information
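One lightweight way to keep this definition next to the code is to record it as data. The `AgentSpec` type below is a hypothetical structure, not part of any framework; it simply restates the goal, capabilities, and constraints listed above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentSpec:
    """Hypothetical container for an agent's purpose; frozen so it cannot drift at runtime."""
    goal: str
    capabilities: tuple[str, ...]
    constraints: tuple[str, ...]


RESEARCH_ASSISTANT = AgentSpec(
    goal="Answer user questions with accurate, well-researched information",
    capabilities=("web search", "information synthesis", "source citation"),
    constraints=("cite sources", "admit uncertainty", "never invent information"),
)

print(RESEARCH_ASSISTANT.capabilities)
```

Writing the spec down this way makes it easy to feed the same text into the system prompt and into tests later.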

Step 2: Choose Your Technology Stack

Select the right tools and frameworks for your agent. For our example:

- LLM Provider - Choose between OpenAI (GPT-4), Anthropic (Claude), Google (Gemini), or open-source models. Consider cost, capabilities, and latency.

- Framework - Use LangChain, LlamaIndex, or build from scratch. Frameworks accelerate development but add dependencies.

- Tools - Integrate search APIs (Google, Bing, SerpAPI), web scraping, document processing, and any domain-specific tools.

- Memory - Decide between in-memory state, databases, or vector stores for context management.

Step 3: Design Your Agent's Prompt

The system prompt is crucial for LLM-based agents. It defines behavior, capabilities, and constraints:

- Define the agent's role and expertise clearly

- List available tools and when to use them

- Specify output format and style requirements

- Include examples of good behavior (few-shot prompting)

- Add safety guidelines and ethical constraints
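The checklist above can be turned into a small prompt builder. This is a sketch under assumptions: the `build_system_prompt` function, the section labels, and the `web_search` tool name are illustrative choices, and the exact wording that works best depends on the model you use.

```python
def build_system_prompt(role: str, tools: dict[str, str], rules: list[str]) -> str:
    """Assemble role, tool descriptions, rules, and output format into one prompt."""
    tool_lines = "\n".join(f"- {name}: {desc}" for name, desc in tools.items())
    rule_lines = "\n".join(f"- {rule}" for rule in rules)
    return (
        f"You are {role}.\n\n"
        f"Available tools:\n{tool_lines}\n\n"
        f"Rules:\n{rule_lines}\n\n"
        "Answer format: a short answer followed by a numbered list of sources."
    )


prompt = build_system_prompt(
    role="a research assistant",
    tools={"web_search": "use for questions about current or factual information"},
    rules=["Cite a source for every claim.", "Say so when you are uncertain."],
)
print(prompt)
```

Keeping the prompt in a function like this also makes it straightforward to version and test, since each section is built from plain data.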

Step 4: Implement the Agent Loop

Create the core agent execution cycle:

- Receive user input and add it to conversation history

- Send prompt + history + available tools to LLM

- Parse LLM response for tool calls or final answers

- Execute any requested tool calls and collect results

- Send tool results back to LLM for synthesis

- Return final response to user

- Update memory/context for future interactions
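The cycle above can be sketched without any provider SDK by substituting a stub for the model. Everything here is an assumption made for illustration: `fake_llm` stands in for a real LLM call, the JSON reply shape (`tool`/`args` or `final`) is an invented convention, and `add` is a toy tool.

```python
import json
from typing import Callable


def fake_llm(messages: list[dict]) -> str:
    """Hypothetical stand-in for a model call: requests a tool once, then answers."""
    if messages[-1]["role"] == "tool":
        return json.dumps({"final": f"Result: {messages[-1]['content']}"})
    return json.dumps({"tool": "add", "args": {"a": 2, "b": 3}})


TOOLS: dict[str, Callable[..., object]] = {"add": lambda a, b: a + b}


def run_agent(user_input: str,
              llm: Callable[[list[dict]], str] = fake_llm,
              max_steps: int = 5) -> str:
    """Loop: ask the model, run any requested tool, feed the result back."""
    messages = [{"role": "user", "content": user_input}]
    for _ in range(max_steps):
        reply = json.loads(llm(messages))
        if "final" in reply:                      # model produced a final answer
            return reply["final"]
        result = TOOLS[reply["tool"]](**reply["args"])
        messages.append({"role": "tool", "content": str(result)})
    return "Stopped: step limit reached."


print(run_agent("What is 2 + 3?"))  # Result: 5
```

The `max_steps` cap matters: without it, a model that keeps requesting tools would loop forever and keep incurring cost.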

Step 5: Add Tools and Capabilities

Implement or integrate tools that extend your agent's capabilities:

- Web search for finding current information

- Document readers for processing PDFs, Word files, etc.

- Calculators for mathematical operations

- API integrations for specialized data sources

- Database queries for accessing structured data
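A simple way to manage tools is a registry that maps names to functions, so the agent loop can dispatch by name. The decorator-based registry below is one possible design, not a framework API; `tool`, `call_tool`, `word_count`, and `percent_change` are hypothetical names.

```python
from typing import Callable

TOOLS: dict[str, Callable[..., object]] = {}


def tool(fn: Callable[..., object]) -> Callable[..., object]:
    """Register a function under its own name so the agent can call it."""
    TOOLS[fn.__name__] = fn
    return fn


@tool
def word_count(text: str) -> int:
    """Count whitespace-separated words in a document."""
    return len(text.split())


@tool
def percent_change(old: float, new: float) -> float:
    """Calculator-style tool: relative change from old to new, in percent."""
    return (new - old) / old * 100.0


def call_tool(name: str, **kwargs: object) -> object:
    """Dispatch a tool call by name, rejecting anything not registered."""
    if name not in TOOLS:
        raise KeyError(f"unknown tool: {name}")
    return TOOLS[name](**kwargs)


print(call_tool("percent_change", old=50.0, new=75.0))  # 50.0
```

Because unknown names are rejected in one place, the registry doubles as the agent's allow-list of actions.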

Essential Tools and Frameworks for AI Agent Development

The AI agent ecosystem offers numerous frameworks and libraries that accelerate development. Choosing the right tools depends on your use case, technical requirements, and team expertise.

Popular Agent Frameworks

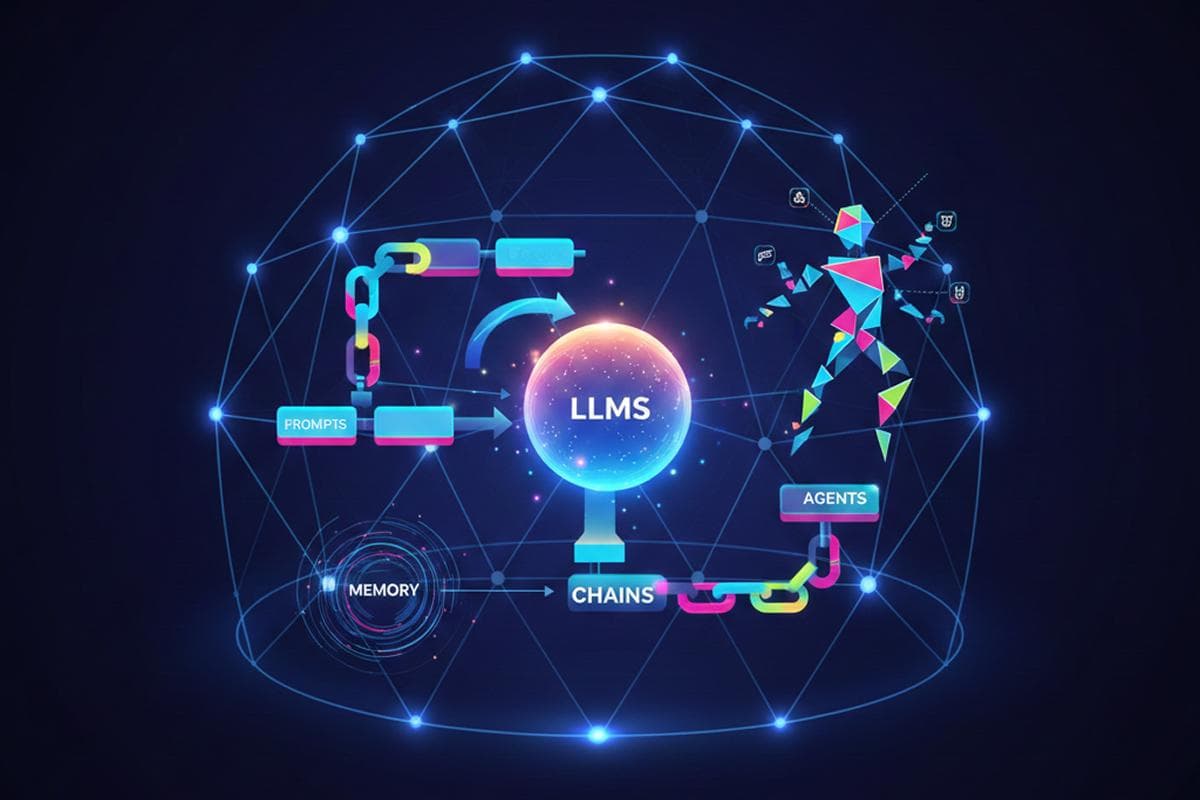

- LangChain / LangGraph - Comprehensive framework for building LLM applications with extensive tool integrations, memory management, and agent templates. Great for rapid prototyping and production applications.

- LlamaIndex - Specializes in data integration and retrieval for AI agents. Excellent for building agents that work with custom documents and databases.

- AutoGPT / BabyAGI - Experimental open-source projects that demonstrate autonomous agents planning and executing multi-step tasks with minimal guidance.

- CrewAI - Focused on multi-agent collaboration, enabling teams of specialized agents to work together on complex tasks.

- Microsoft Semantic Kernel - Enterprise-focused framework with strong C# and Python support, ideal for integrating AI into existing systems.

Supporting Tools and Services

- Vector Databases: Pinecone, Weaviate, Chroma for semantic search and memory

- Observability: LangSmith, Weights & Biases for monitoring and debugging

- Prompt Management: PromptLayer, Helicone for version control and testing

- Deployment: Modal, Replicate, or cloud platforms for hosting agents

Best Practices for Building Reliable AI Agents

Building AI agents that work reliably in production requires more than just connecting to an LLM API. Follow these best practices to create robust, maintainable agent systems.

Design Principles

- Start Simple - Begin with a minimal viable agent and add complexity incrementally. It's easier to expand a working simple agent than debug a complex one.

- Clear Instructions - Write explicit, detailed prompts. Assume nothing is obvious to the LLM. Test and refine prompts iteratively.

- Bounded Autonomy - Set clear limits on what your agent can do. Implement approval workflows for high-stakes actions.

- Graceful Degradation - Plan for failures. What happens when tools fail, APIs are down, or the LLM produces unexpected output?

- Human in the Loop - For critical applications, keep humans involved in decision-making, especially for high-impact actions.
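Bounded autonomy and human-in-the-loop approval can be as simple as a gate in front of the action executor. In this sketch the `HIGH_STAKES` set and its action names are hypothetical, and approval is modeled as a boolean flag rather than a real review workflow.

```python
HIGH_STAKES = {"send_payment", "delete_records"}  # hypothetical action names


def execute(action: str, approved_by_human: bool = False) -> str:
    """Run low-risk actions directly; block high-stakes ones until a human approves."""
    if action in HIGH_STAKES and not approved_by_human:
        return f"BLOCKED: '{action}' needs human approval"
    return f"EXECUTED: {action}"


print(execute("search_docs"))                           # EXECUTED: search_docs
print(execute("send_payment"))                          # BLOCKED: 'send_payment' needs human approval
print(execute("send_payment", approved_by_human=True))  # EXECUTED: send_payment
```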

Implementation Best Practices

- Comprehensive Logging - Log all inputs, LLM responses, tool calls, and outputs. This is invaluable for debugging and improving your agent.

- Error Handling - Implement robust error handling for API failures, parsing errors, and unexpected behaviors. Have fallback strategies.

- Rate Limiting - Protect against runaway costs with rate limits on LLM calls, tool executions, and overall agent actions.

- Testing Strategy - Create test suites with diverse scenarios including edge cases, adversarial inputs, and common failure modes.

- Version Control - Track changes to prompts, tool configurations, and agent logic. Prompts are code—treat them accordingly.

- Cost Monitoring - Track LLM API costs per interaction, per user, and per feature. Set up alerts for unusual spending.
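Logging, error handling, and fallbacks often end up in one small wrapper around every external call. The following is a minimal sketch using only the standard library; `call_with_retry` and `flaky_search` are hypothetical names, and catching every `Exception` is a simplification you would normally narrow to the errors your API client raises.

```python
import logging
import time

logging.basicConfig(level=logging.INFO)
log = logging.getLogger("agent")


def call_with_retry(fn, retries: int = 3, base_delay: float = 0.01, fallback=None):
    """Call fn(); on failure, log it, back off exponentially, and retry."""
    for attempt in range(1, retries + 1):
        try:
            return fn()
        except Exception as exc:  # broad on purpose for this sketch
            log.warning("attempt %d/%d failed: %s", attempt, retries, exc)
            if attempt < retries:
                time.sleep(base_delay * 2 ** (attempt - 1))
    return fallback  # all attempts failed


attempts = {"n": 0}


def flaky_search() -> str:
    """Hypothetical tool that fails twice before succeeding."""
    attempts["n"] += 1
    if attempts["n"] < 3:
        raise ConnectionError("search API unavailable")
    return "3 results"


print(call_with_retry(flaky_search))  # 3 results
```

The `fallback` value lets the caller degrade gracefully, for example by telling the user that search is temporarily unavailable instead of crashing.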

Common Challenges and Solutions

Building AI agents comes with unique challenges. Understanding these pitfalls and their solutions will save you time and frustration.

Challenge 1: Hallucinations and Accuracy

LLMs can generate plausible-sounding but incorrect information. To reduce the risk:

- Use tools to verify facts from reliable sources

- Implement confidence scoring and uncertainty handling

- Ground responses in retrieved documents (RAG)

- Add review mechanisms for critical outputs
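One cheap review mechanism is to check that every citation in an answer points at a document the agent actually retrieved. The sketch below assumes answers cite sources with bracketed IDs such as `[doc1]`; that convention and the `check_grounding` function are assumptions for illustration, and the check only verifies that citations exist, not that the cited text supports the claim.

```python
import re


def check_grounding(answer: str, retrieved_ids: set[str]) -> tuple[bool, list[str]]:
    """Return (is_grounded, unknown_citations) for an answer with [id] citations."""
    cited = re.findall(r"\[([A-Za-z0-9_-]+)\]", answer)
    unknown = [c for c in cited if c not in retrieved_ids]
    # Grounded only if there is at least one citation and all of them are known.
    return (bool(cited) and not unknown, unknown)


retrieved = {"doc1", "doc2"}
print(check_grounding("Paris is the capital of France [doc1].", retrieved))  # (True, [])
print(check_grounding("The moon is made of cheese [doc9].", retrieved))      # (False, ['doc9'])
print(check_grounding("Trust me on this one.", retrieved))                   # (False, [])
```

Answers that fail the check can be regenerated or routed to a human reviewer.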

Challenge 2: Context Window Limitations

Conversations and task histories can exceed the model's token limit. To stay within it:

- Implement smart summarization of conversation history

- Use vector stores for semantic retrieval of relevant context

- Prioritize recent and important information

- Consider models with larger context windows for complex tasks
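The "prioritize recent information" strategy can be sketched as a function that keeps the newest messages that fit a token budget. Counting whitespace-separated words is a crude stand-in for a real tokenizer, and `trim_history` is a hypothetical helper, so treat the numbers as illustrative only.

```python
def trim_history(messages: list[str], max_tokens: int) -> list[str]:
    """Keep the most recent messages whose rough token count fits the budget."""
    kept: list[str] = []
    used = 0
    for msg in reversed(messages):      # walk from newest to oldest
        cost = len(msg.split())         # crude proxy: one token per word
        if used + cost > max_tokens:
            break
        kept.append(msg)
        used += cost
    return list(reversed(kept))         # restore chronological order


history = ["one two three", "four five", "six"]
print(trim_history(history, 3))  # ['four five', 'six']
```

A fuller version would summarize the dropped messages rather than discard them, as the first bullet above suggests.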

Challenge 3: Unpredictable Behavior

Agents can take unexpected actions or misinterpret instructions. To keep behavior predictable:

- Start with constrained action spaces and expand carefully

- Use structured output formats (such as JSON) so responses can be parsed reliably

- Implement validators for agent actions before execution

- Test extensively with diverse inputs and scenarios
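Structured output and pre-execution validation work well together: parse the model's reply as JSON, then reject anything outside a constrained action space. The allowed tools and argument names below are hypothetical, as is the `{"tool": ..., "args": ...}` reply shape.

```python
import json

# Hypothetical constrained action space: tool name -> exact set of argument names.
ALLOWED = {"search": {"query"}, "calculate": {"expression"}}


def parse_action(raw: str) -> dict:
    """Parse and validate a model-proposed action; raise ValueError if invalid."""
    try:
        action = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"not valid JSON: {exc}") from exc
    if not isinstance(action, dict):
        raise ValueError("action must be a JSON object")
    tool = action.get("tool")
    if not isinstance(tool, str) or tool not in ALLOWED:
        raise ValueError(f"tool not allowed: {tool!r}")
    args = action.get("args", {})
    if not isinstance(args, dict) or set(args) != ALLOWED[tool]:
        raise ValueError(f"expected args {sorted(ALLOWED[tool])}")
    return action


print(parse_action('{"tool": "search", "args": {"query": "AI agents"}}'))
```

Anything that raises `ValueError` never reaches the executor, which keeps a malformed or adversarial reply from turning into an action.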

Challenge 4: Cost Management

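LLM API costs can escalate quickly in agent applications, because a single user request may trigger several model and tool calls. To keep spending under control: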

- Cache repeated queries and tool results

- Use smaller, cheaper models for simple tasks

- Write concise prompts to reduce token usage

- Set hard limits and budgets per user or session
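Caching and hard limits can be combined so that only cache misses count against the budget. This sketch uses the standard library's `functools.lru_cache`; the `Budget` class and the `answer` function are hypothetical, and the string it returns stands in for a paid LLM call.

```python
from functools import lru_cache


class Budget:
    """Hypothetical per-session cap on the number of paid calls."""

    def __init__(self, max_calls: int) -> None:
        self.max_calls = max_calls
        self.calls = 0

    def charge(self) -> None:
        if self.calls >= self.max_calls:
            raise RuntimeError("session budget exhausted")
        self.calls += 1


budget = Budget(max_calls=2)


@lru_cache(maxsize=256)
def answer(question: str) -> str:
    budget.charge()                   # only runs on a cache miss
    return f"answer to: {question}"   # stand-in for a paid LLM call


answer("What is RAG?")
answer("What is RAG?")                # served from the cache, not charged
print(budget.calls)  # 1
```

Exact-match caching only helps with repeated identical queries; semantically similar questions would need a different lookup, such as a vector store.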

Real-World Applications of AI Agents

AI agents are transforming industries and workflows across the board. Understanding these applications can inspire your own agent implementations and demonstrate the technology's versatility.

Business Applications

- Customer Support - Intelligent chatbots that understand context, access knowledge bases, escalate to humans when needed, and learn from interactions.

- Sales Assistants - Agents that qualify leads, answer product questions, schedule demos, and personalize outreach based on customer data.

- Data Analysis - Agents that query databases, generate visualizations, identify trends, and provide natural language insights from complex data.

- Content Creation - Multi-agent systems that research topics, write drafts, edit for quality, and optimize for SEO.

Technical Applications

- Code Assistants - Agents that understand codebases, suggest improvements, write tests, and help debug issues.

- DevOps Automation - Agents that monitor systems, diagnose problems, suggest fixes, and execute approved remediation actions.

- Research Assistants - Agents that search academic papers, summarize findings, identify research gaps, and generate literature reviews.

- Testing Automation - Agents that generate test cases, identify edge cases, and create comprehensive test suites.

Personal Productivity

- Email management and intelligent triage

- Meeting schedulers that handle complex constraints

- Personal knowledge managers that organize and retrieve information

- Learning companions that adapt to individual study styles

Testing and Evaluating AI Agents

Unlike traditional software, AI agents require specialized testing approaches because their outputs are non-deterministic. Thorough evaluation before deployment helps confirm that your agent performs reliably.

Testing Strategies

- Unit Testing - Test individual components like tool integrations, prompt templates, and parsers. These can use traditional unit testing frameworks.

- Integration Testing - Test complete agent workflows end-to-end with various inputs. Verify that all components work together correctly.

- Behavioral Testing - Define expected behaviors for different scenarios and verify the agent acts appropriately. Focus on intent rather than exact outputs.

- Adversarial Testing - Try to break your agent with edge cases, contradictory instructions, prompt injections, and malicious inputs.

- A/B Testing - Compare different prompt versions, models, or configurations with real usage data to identify improvements.
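Behavioral tests assert on intent rather than exact wording. In the sketch below, `support_agent` is a hypothetical rule-based stand-in for a real LLM-backed agent; the test functions use plain `assert` statements and follow the `test_` naming convention, so a runner such as pytest could collect them, though they are called directly here.

```python
def support_agent(message: str) -> str:
    """Hypothetical agent under test; a real one would call an LLM."""
    if "refund" in message.lower():
        return "I can help with that. I am escalating your refund request to a human."
    return "Could you tell me more about the issue?"


def test_refund_requests_are_escalated() -> None:
    for phrasing in ["I want a refund", "REFUND please", "Can I get a refund?"]:
        reply = support_agent(phrasing).lower()
        assert "escalat" in reply  # checks intent, not exact wording


def test_unrelated_message_asks_for_detail() -> None:
    assert support_agent("hello").endswith("?")


test_refund_requests_are_escalated()
test_unrelated_message_asks_for_detail()
print("behavioral tests passed")
```

With a non-deterministic agent, the same idea applies, but each scenario is usually run several times and judged by pass rate.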

Key Metrics to Track

- Task Success Rate: Percentage of tasks completed correctly

- Response Quality: Accuracy, relevance, and helpfulness of outputs

- Latency: Time to generate responses

- Cost per Interaction: Average LLM and tool costs

- Error Rate: Frequency of failures and incorrect behaviors

- User Satisfaction: Feedback and ratings from users
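Several of these metrics fall out directly from interaction logs. The records and field names below (`success`, `latency_s`, `cost_usd`) are hypothetical sample data, not measurements from a real system.

```python
from statistics import mean

# Hypothetical interaction log records.
LOGS = [
    {"success": True,  "latency_s": 1.2, "cost_usd": 0.004},
    {"success": True,  "latency_s": 0.8, "cost_usd": 0.002},
    {"success": False, "latency_s": 3.0, "cost_usd": 0.009},
    {"success": True,  "latency_s": 1.0, "cost_usd": 0.005},
]


def summarize(logs: list[dict]) -> dict[str, float]:
    """Compute success rate, error rate, average latency, and average cost."""
    if not logs:
        raise ValueError("no interactions logged")
    n = len(logs)
    successes = sum(1 for record in logs if record["success"])
    return {
        "task_success_rate": successes / n,
        "error_rate": (n - successes) / n,
        "avg_latency_s": mean(record["latency_s"] for record in logs),
        "cost_per_interaction_usd": mean(record["cost_usd"] for record in logs),
    }


print(summarize(LOGS))
```

Response quality and user satisfaction are harder to automate and usually need human ratings or a separate evaluation step.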

Ethical Considerations and Safety

As AI agents become more autonomous and capable, ethical considerations and safety measures become increasingly important. Building responsible AI agents requires proactive attention to potential harms.

Safety Guidelines

- Transparency - Make it clear when users are interacting with an AI agent. Don't deceive users about the agent's capabilities or limitations.

- Bias Mitigation - Test for and address biases in agent behavior. Ensure fair treatment across different user groups.

- Privacy Protection - Handle user data responsibly. Implement data minimization and secure storage practices.

- Action Boundaries - Limit agent actions to safe, approved operations. Require human approval for high-stakes decisions.

- Monitoring - Continuously monitor agent behavior for unexpected or harmful patterns. Have mechanisms to quickly disable problematic agents.

The Future of AI Agents

AI agent technology is evolving rapidly. Understanding emerging trends helps you future-proof your implementations and identify new opportunities.

Emerging Trends

- Multi-Modal Agents - Agents that work with text, images, audio, and video, enabling richer interactions and capabilities.

- Improved Reasoning - Better planning, long-term memory, and multi-step reasoning through techniques like chain-of-thought and tree search.

- Agent Collaboration - Frameworks for multiple specialized agents working together, similar to human teams.

- Embodied Agents - AI agents controlling robots and physical systems, bridging digital and physical worlds.

- Continuous Learning - Agents that improve from every interaction without manual retraining.

The convergence of better models, more sophisticated frameworks, and increased compute availability is making AI agents more capable and accessible. We're moving from agents as experimental prototypes to agents as production infrastructure.

Conclusion: Your Journey with AI Agents Begins Now

AI agents represent one of the most exciting frontiers in artificial intelligence. From simple chatbots to sophisticated autonomous systems, agents are transforming how we interact with technology and automate complex workflows.

Building your first AI agent doesn't require extensive AI expertise—modern frameworks and LLMs have democratized agent development. Start with a clear use case, leverage existing tools and frameworks, and iterate based on real-world testing. Focus on reliability, safety, and user value rather than maximizing autonomy.

As you gain experience, you'll develop intuition for prompt engineering, tool integration, and agent architecture. The field is evolving rapidly, so continuous learning and experimentation are essential. Whether you're building customer service bots, research assistants, or automation tools, the principles and practices outlined in this guide will serve as your foundation. The age of AI agents is here—it's time to build.
